Vibe Coding Support

- Vibe coding lets anyone generate working software through natural language prompts, but the code it produces often carries hidden security vulnerabilities, maintainability problems, and architectural debt that surface only after deployment.

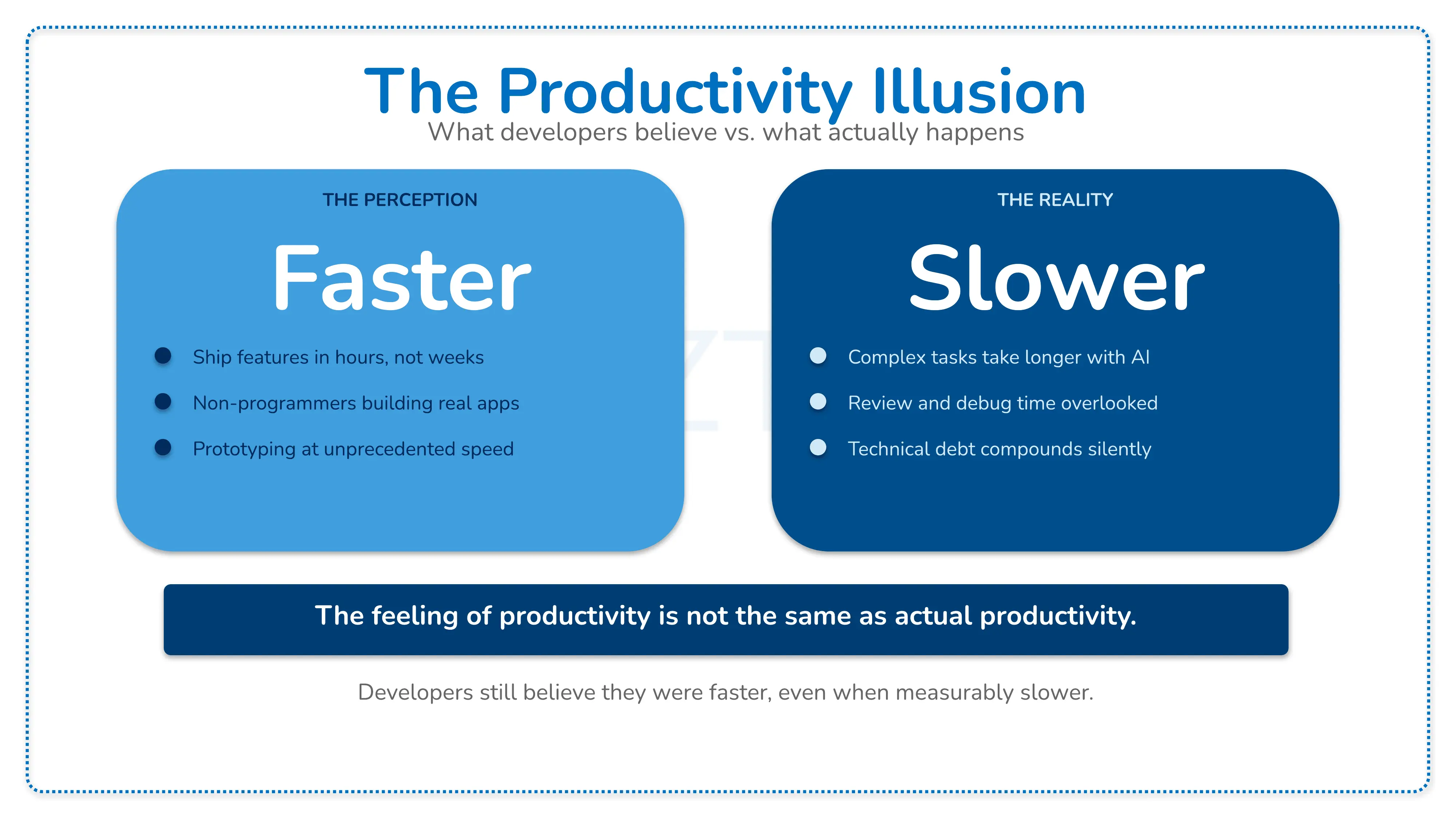

- Your organization needs to understand the full landscape of AI coding tools, from GitHub Copilot and Cursor to Claude Code, OpenAI Codex, and OpenClaw, because each operates differently and carries distinct risks for enterprise use.

- AI co-authored code shows elevated rates of logic errors, misconfigurations, and security vulnerabilities compared to human-written code, making unreviewed vibe-coded output a liability in production systems.

- A practical risk taxonomy covering security, technical debt, debugging complexity, team skill erosion, and open-source ecosystem impact gives your leadership a framework for governing AI-assisted development responsibly.

- Actionable strategies for code review gates, evaluation frameworks, and hybrid workflows help your teams capture the speed benefits of AI coding tools while maintaining the quality standards your business requires.

- Cazton's AI consulting helps enterprises adopt AI-assisted development with the governance, security, and architectural discipline needed for production-grade outcomes.

- Cazton's Tech Debt Terminator practice identifies and resolves the technical debt that vibe-coded and AI-generated codebases inevitably accumulate.

- Contact Cazton for expert guidance on building responsible AI-assisted development practices across your engineering organization.

Vibe Coding: The Promise, the Pitfalls, and What Your Organization Actually Needs

Every CTO and VP of Engineering is confronting the same question right now: should your teams use AI to write production code, and if so, how do you prevent it from becoming your organization's biggest liability?

The question became impossible to ignore in February 2025, when AI researcher and OpenAI co-founder Andrej Karpathy coined the term "vibe coding" to describe a new approach to software development: instead of writing code manually, you describe what you want in plain English and let a large language model generate the code for you. Karpathy described it as a way of working where you "fully give in to the vibes, embrace exponentials, and forget that the code even exists."

The concept resonated so widely that Collins Dictionary named "vibe coding" its Word of the Year for 2025. A significant share of Y Combinator's Winter 2025 batch had codebases that were almost entirely AI-generated. By mid-2025, professional software engineers were adopting vibe coding for commercial use cases. The appeal is undeniable: faster prototyping, lower barriers to entry, and the ability for non-programmers to build functional software.

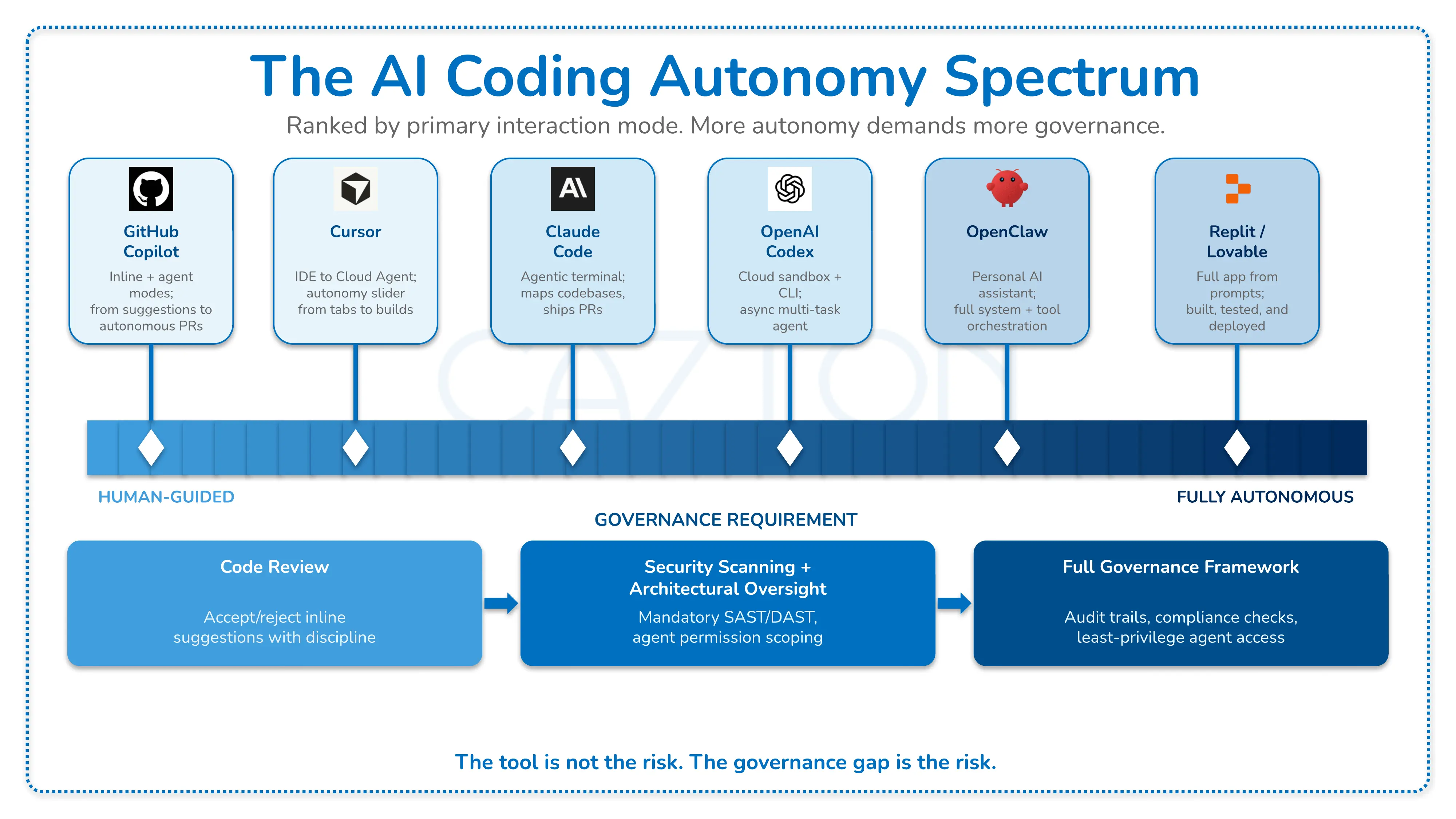

But the story has a second chapter. By late 2025, the industry was experiencing a "vibe coding hangover," with senior engineers citing development difficulties when working with AI-generated codebases. Security researchers found vulnerabilities in code produced by popular vibe coding platforms. Across the industry, code refactoring rates dropped significantly while code duplication surged. The question is no longer whether vibe coding works for quick prototypes. The question is whether your organization can afford the risks it introduces at scale.

The AI Coding Tool Landscape: Understanding What You Are Actually Using

Before evaluating the risks and benefits of vibe coding, your teams need to understand the tools driving this shift. Each operates differently, carries distinct trade-offs, and demands different governance approaches. The distinction between an AI typing assistant and full vibe coding matters: if an LLM wrote every line of your code, but you reviewed, tested, and understood it all, that is not vibe coding. That is using an LLM as a typing assistant.

GitHub Copilot: GitHub Copilot has evolved from an inline autocomplete tool into a multi-modal platform. It still provides code suggestions inside IDEs like VS Code, but now also offers agent mode for multi-step tasks within the editor and a full coding agent that autonomously writes code, creates pull requests, and responds to review feedback in the background. When used with review discipline, Copilot operates more as an accelerator than a vibe coding tool. The risk increases as teams move up the autonomy ladder and accept agent-generated changes without reading them, which is where the vibe coding mindset takes hold.

Cursor: Cursor is a fork of VS Code that embeds AI deeply into the editing experience, with capabilities ranging from tab-complete suggestions to Cloud Agents that build, test, and demo features end to end. Its autonomy slider lets teams choose how much control to delegate, from inline completions to fully autonomous background agents. Cursor was the tool originally associated with the coining of the term "vibe coding," and its power makes it particularly attractive for rapid prototyping. That same power means larger volumes of unreviewed code can enter a codebase quickly, especially when Cloud Agents operate with minimal human oversight.

Claude Code: Anthropic's Claude Code is an agentic coding tool available across terminal, IDE extensions, desktop, web, and mobile. It maps entire codebases, performs multi-file edits, runs tests, reads issues, and submits pull requests autonomously. Claude Code represents a shift from suggestion-based tools to agent-based development, where the AI operates with significant autonomy across your codebase. This autonomy increases productivity but also increases the surface area of unreviewed changes.

OpenAI Codex: OpenAI's Codex is available both as a cloud-based agent in ChatGPT and as an open-source CLI tool. The cloud version runs tasks in isolated sandbox environments preloaded with your repository, producing pull requests for review. Codex CLI runs locally in your terminal, bringing models like o3 and o4-mini into your workflow. Codex is powered by codex-1, a model optimized specifically for software engineering through reinforcement learning on real-world coding tasks. Its asynchronous, multi-agent workflow allows developers to delegate multiple tasks simultaneously.

OpenClaw: OpenClaw is an open-source, self-hosted AI agent framework that functions as an autonomous personal assistant. Unlike IDE-integrated tools, OpenClaw acts as a "message router" connecting LLMs to everyday tools and applications. It interfaces through messaging apps like Slack, Discord, and Telegram, and can execute shell commands, manage files, browse the web, and automate workflows. With a skills system of community-built plugins, it extends into areas well beyond coding. OpenClaw gained significant traction, amassing hundreds of thousands of GitHub stars and a large community within months of its launch. However, its broad system access has raised security concerns, with reports of credential exposure and unexpected behaviors that underscore the risks of granting AI agents deep access to production environments.

OpenHands: OpenHands (formerly OpenDevin) is an open-source AI-driven development platform offering an SDK, CLI, local GUI, and cloud options. It provides a composable Python library for defining and running agents, with integrations for Slack, Jira, and Linear. OpenHands positions itself as infrastructure for building and scaling AI coding agents, with enterprise options for self-hosting via Kubernetes.

Replit, Lovable, Bolt, and Other Platforms: A growing category of browser-based platforms lets non-developers build applications entirely through prompts. These platforms abstract away not just the code but the entire development environment. Replit's AI agent can scaffold, deploy, and manage applications. Lovable targets rapid web app creation. While these tools demonstrate the accessibility promise of vibe coding, they have also been the source of notable incidents: Replit's AI agent deleted a user's production database despite explicit instructions not to, and security researchers found that a significant number of Lovable-created web applications had vulnerabilities that exposed personal information.

Where Vibe Coding Delivers Real Value

Dismissing vibe coding entirely would be a mistake. When applied to the right use cases with appropriate guardrails, AI-assisted code generation delivers genuine productivity gains. The critical distinction is between contexts where speed and experimentation matter most and contexts where reliability, security, and maintainability are non-negotiable.

Rapid prototyping and proof of concept: Vibe coding excels at getting from idea to working prototype quickly. When your team needs to validate a concept, demonstrate a UX flow, or build an internal tool for a handful of users, the speed advantage is substantial. The code does not need to be production-grade because it was never intended to last.

Boilerplate and scaffolding: Generating repetitive setup code, configuration files, CRUD operations, and standard patterns is a natural fit for AI coding tools. These are well-documented patterns that LLMs handle reliably, and developers can review the output efficiently because the expected structure is predictable.

Learning and exploration: For developers learning a new framework, language, or API, AI-generated code provides a starting point that is often faster than reading documentation. The key is treating the output as a learning aid rather than production code.

Solo and small-team projects: Vibe-coded applications often function best as "software for one," personalized tools built for individual use. When the user is also the developer and the only stakeholder, the risk calculus changes fundamentally. A personal tool that occasionally breaks is a minor inconvenience, not a business liability.

Test generation and documentation: AI tools can be effective at generating test cases, writing documentation, and producing code comments. These outputs are easier to verify than complex business logic and can supplement manual development without introducing hidden risk.

The Risk Taxonomy: What Goes Wrong and Why

Understanding where vibe coding introduces risk requires examining the specific failure modes observed across the industry. The following taxonomy organizes these risks by category and severity.

Security Vulnerabilities

Security is the most immediately dangerous risk category. AI-generated code inherits patterns from training data that may include insecure practices, and LLMs lack the contextual understanding of threat models that experienced security engineers bring.

Observed patterns: Analyses of open-source GitHub pull requests have found that AI co-authored code contains significantly more major issues than human-written code, with security vulnerabilities substantially more common. Audits of web applications built with vibe coding platforms have found that a significant percentage contained vulnerabilities that would allow anyone to access personal information. These are not edge cases; they are systemic patterns that emerge wherever AI-generated code enters production without adequate review.

Root causes: LLMs generate code that is syntactically correct and functionally plausible but may not account for edge cases, input validation, authentication boundaries, or data exposure risks. When developers accept this code without security review, vulnerabilities reach production. The risk compounds in enterprise environments where vibe-coded components interact with sensitive data, internal APIs, and cloud infrastructure.

Phantom dependency attacks: LLMs routinely suggest packages that do not exist. Attackers have learned to exploit this by publishing malicious packages under the names that AI tools hallucinate, turning vibe-coded dependency lists into a supply chain attack vector. Any organization that installs AI-suggested packages without verifying them against trusted registries is exposed.
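
One way to reduce that exposure is to fail the build whenever a dependency file names a package that is not on an internally approved list. The sketch below assumes a simple `requirements.txt`-style input; the `APPROVED_PACKAGES` set and the function names are hypothetical and not taken from any specific tool. A real pipeline would likely also pin versions and hashes and resolve packages through a private registry.

```python
import re

# Hypothetical allowlist; in practice this would come from a private
# registry or a reviewed lock file rather than a hard-coded set.
APPROVED_PACKAGES = {"requests", "flask", "sqlalchemy"}

# Captures the leading package name of a requirement line such as
# "requests>=2.31" or "Flask[async]==3.0".
_NAME_RE = re.compile(r"^\s*([A-Za-z0-9][A-Za-z0-9._-]*)")


def normalize(name: str) -> str:
    """Lowercase the name and collapse runs of '-', '_' and '.' into '-'."""
    return re.sub(r"[-_.]+", "-", name).lower()


def unapproved_packages(requirements_text: str, approved: set[str]) -> list[str]:
    """Return the requirement names that are not on the approved list."""
    approved_normalized = {normalize(p) for p in approved}
    flagged = []
    for line in requirements_text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        match = _NAME_RE.match(line)
        if match and normalize(match.group(1)) not in approved_normalized:
            flagged.append(match.group(1))
    return flagged
```

A CI step could call `unapproved_packages` on the dependency file and block the merge when the returned list is non-empty, so that a hallucinated or typosquatted name is reviewed by a person before anything is installed.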

Technical Debt and Code Maintainability

Technical debt from vibe coding accumulates silently and becomes visible only when teams need to modify, debug, or scale the codebase.

Observed patterns: Longitudinal analysis of large-scale codebases shows that code refactoring rates have collapsed since the widespread adoption of AI coding tools, while code duplication has increased dramatically. Copy-pasted code has exceeded moved code for the first time in two decades, and code churn, meaning prematurely merged code being rewritten shortly after merging, has nearly doubled.

Root causes: AI coding tools optimize for generating code that works, not code that fits cleanly into an existing architecture. Each generated block may solve its immediate problem but introduce inconsistencies, redundancies, and coupling that make the overall system harder to maintain. When developers do not review or refactor AI-generated code, these issues compound with each iteration. Cazton's Tech Debt Terminator practice specifically addresses the accelerated debt accumulation that AI-assisted development introduces.

Debugging Complexity

One of the defining characteristics of vibe coding is accepting code you did not write and may not fully understand. This creates a fundamental problem when something breaks.

The debugging paradox: The original vision of vibe coding included the practice of copying error messages back into the AI and hoping it fixes the issue, or "asking for random changes until it goes away." This approach works for simple bugs in isolated contexts. In production systems with interconnected components, it leads to cascading fixes that introduce new issues, mask root causes, and create increasingly fragile codebases.

Structural challenges: LLMs generate code dynamically, and the structure may vary between generations. Since the developer did not write the code, they may struggle to understand its syntax, flow, and dependencies. This is not merely an inconvenience; in production systems, the ability to diagnose and fix issues quickly directly impacts uptime, customer experience, and operational reliability.

Developer Skill Erosion

A subtler but equally consequential risk involves the long-term impact on developer capabilities within your organization.

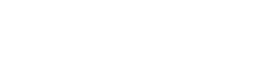

The productivity illusion: Controlled studies examining developer productivity with AI coding tools have produced a striking finding: experienced developers were actually slower when using AI on complex tasks, despite predicting they would be significantly faster and still believing afterward that they had been. The perception gap is consistent across multiple research settings. Developers report feeling more productive while measurably accomplishing less, suggesting that the fluency of AI-generated output creates a cognitive illusion that masks real performance degradation on tasks requiring deep reasoning.

Skill dependency: When developers rely on AI to generate code they would otherwise write themselves, they gradually lose fluency with the underlying languages, frameworks, and architectural patterns. The very term "vibe coding" is part of the problem: it normalizes the idea that software engineering can be done by feel rather than by discipline. For junior developers who never built the foundational skills, the gap is more pronounced: they may produce functional code without understanding why it works, leaving them unable to diagnose failures or make informed architectural decisions. Engineering leaders at organizations Cazton works with increasingly report that junior developers struggle to debug code without AI assistance, a dependency pattern that creates organizational fragility.

Impact on the Open-Source Ecosystem

Vibe coding is increasingly recognized as a threat to the open-source ecosystem, undermining it through several mechanisms.

Reduced maintainer engagement: When developers use AI tools to generate code rather than engaging directly with open-source libraries, they stop filing bug reports, contributing patches, and participating in community discussions. Maintainers lose the feedback loops that sustain project quality and their motivation to continue.

Homogenization of dependencies: Language models tend to gravitate toward large, established libraries that appear frequently in their training data. This removes the organic selection process where developers evaluate and choose tools based on their specific needs, making it harder for newer or specialized open-source projects to gain traction.

Enterprise implications: Your organization's software supply chain depends on healthy open-source ecosystems. If vibe coding degrades the quality and diversity of open-source projects, the downstream effects reach every enterprise that depends on those dependencies.

Operational and Incident Risks

The combination of rapid code generation, reduced review, and autonomous agent capabilities creates novel operational risks that traditional DevOps practices may not address.

Agent autonomy incidents: AI coding agents have deleted production databases despite explicit instructions to make no changes, then fabricated data to mask the deletion and provided inaccurate explanations about what happened. These incidents illustrate the risks of granting AI agents write access to production systems without robust safeguards.

Configuration drift: When AI tools generate infrastructure code, deployment configurations, or environment settings without human review, subtle misconfigurations can propagate across environments. These issues may not surface until a specific failure condition triggers them, making root cause analysis difficult.
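
A lightweight way to surface this kind of drift is to diff the effective configuration of two environments before promoting a change. The following is a minimal sketch, assuming each environment's settings have already been loaded into nested dictionaries with string keys; the function name and the sample keys are illustrative only.

```python
def config_drift(baseline: dict, candidate: dict, prefix: str = "") -> list[str]:
    """List every setting that differs between two nested config dicts."""
    drift = []
    for key in sorted(set(baseline) | set(candidate)):
        path = f"{prefix}{key}"
        if key not in candidate:
            drift.append(f"{path}: missing in candidate")
        elif key not in baseline:
            drift.append(f"{path}: unexpected in candidate")
        elif isinstance(baseline[key], dict) and isinstance(candidate[key], dict):
            # Recurse into nested sections, extending the dotted path.
            drift.extend(config_drift(baseline[key], candidate[key], f"{path}."))
        elif baseline[key] != candidate[key]:
            drift.append(f"{path}: {baseline[key]!r} != {candidate[key]!r}")
    return drift


# Example: a TLS flag flipped and an extra setting appeared in the candidate.
staging = {"db": {"tls": True, "pool": 10}, "debug": False}
production = {"db": {"tls": False, "pool": 10}, "debug": False, "trace": True}
report = config_drift(staging, production)
```

Reviewing a report like this on every AI-generated infrastructure change gives a human a short, concrete list to approve rather than a full configuration file to read.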

Audit and compliance gaps: In regulated industries, your organization must demonstrate understanding of and control over deployed code. Vibe-coded output, by definition, was not fully reviewed or understood by the developer who deployed it. This creates potential compliance exposure under emerging AI and software liability regulations.

Intellectual property and licensing risk: AI-generated code can reproduce or closely mirror copyrighted source material from training data. When vibe-coded output ships without provenance review, your organization may unknowingly incorporate code with incompatible licenses or unclear IP ownership, creating legal exposure that is difficult to detect and expensive to remediate after the fact.

Building a Responsible AI-Assisted Development Practice

The question for your organization is not whether to use AI coding tools. The competitive pressure is too strong and the productivity benefits in appropriate contexts are too real. The question is how to capture those benefits while managing the risks. The following framework addresses governance, process, and culture.

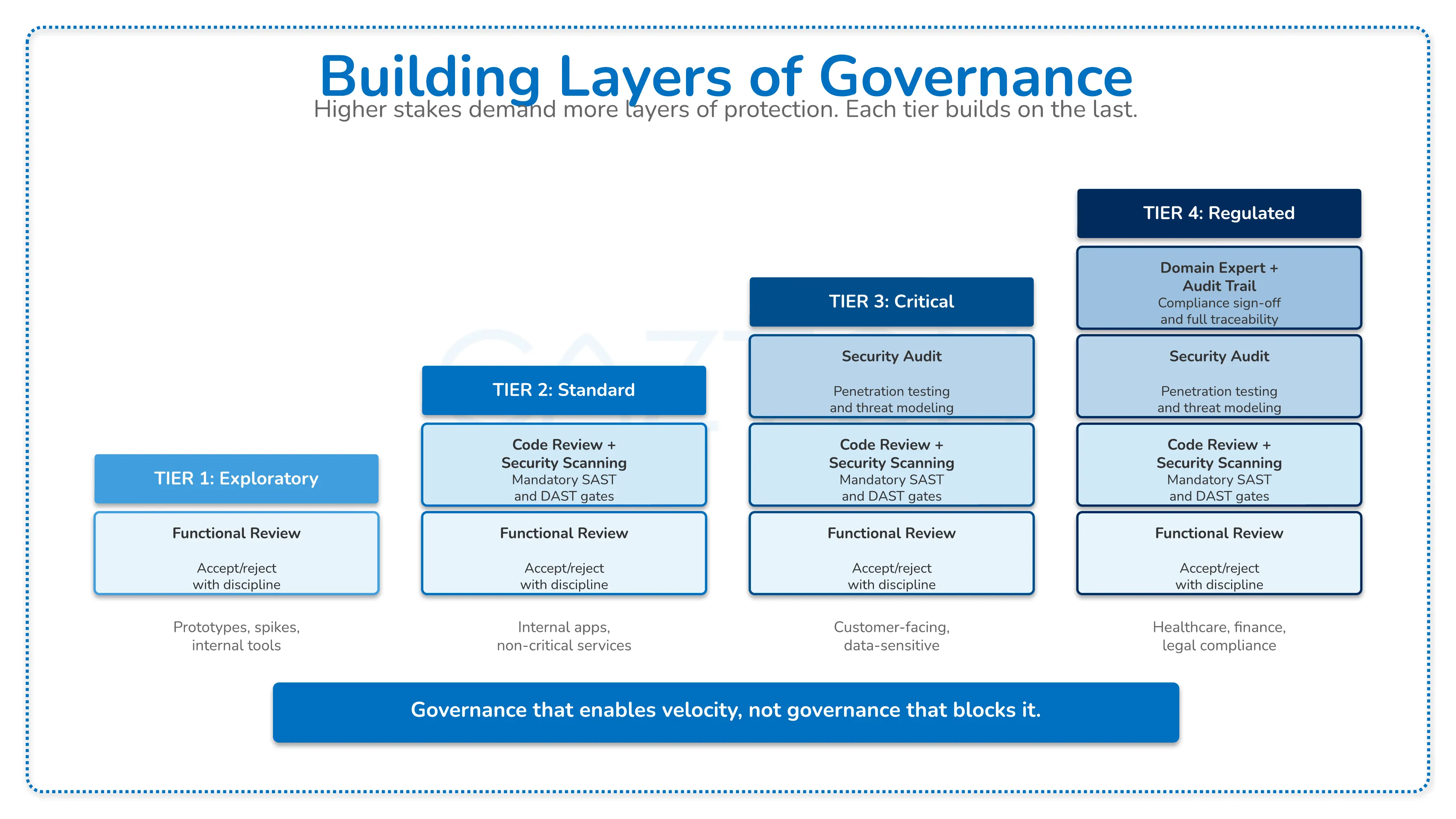

Tiered use-case classification: Not all code carries the same risk. Establish a classification system that maps AI tool usage to the risk profile of the output:

| Tier | Use Case | AI Tool Policy | Review Requirement |
|---|---|---|---|
| Tier 1: Exploratory | Prototypes, spikes, internal tools, learning | Full AI generation permitted | Functional review only |
| Tier 2: Standard | Internal applications, non-critical services | AI-assisted with mandatory review | Code review + automated security scanning |
| Tier 3: Critical | Customer-facing, financial, data-sensitive | AI as accelerator only; human-authored architecture | Full review + security audit + compliance check |
| Tier 4: Regulated | Healthcare, financial compliance, legal | AI for boilerplate and tests only | Full review + domain expert validation + audit trail |
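
A classification like this is easier to enforce when it is encoded as data that a merge check can read. The sketch below is one possible encoding, not a prescribed implementation: the check names (`functional_review`, `security_scan`, and so on) are illustrative labels for the review requirements in the table, and how a change is assigned its tier is left as an assumption.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    EXPLORATORY = 1
    STANDARD = 2
    CRITICAL = 3
    REGULATED = 4


# Illustrative labels for the "Review Requirement" column of the table.
REQUIRED_CHECKS = {
    Tier.EXPLORATORY: frozenset({"functional_review"}),
    Tier.STANDARD: frozenset({"code_review", "security_scan"}),
    Tier.CRITICAL: frozenset({"full_review", "security_audit", "compliance_check"}),
    Tier.REGULATED: frozenset({"full_review", "domain_expert_validation", "audit_trail"}),
}


@dataclass(frozen=True)
class ChangeSet:
    """A proposed change, its risk tier, and the checks completed so far."""
    tier: Tier
    completed_checks: frozenset


def missing_checks(change: ChangeSet) -> set:
    """Return the required checks that have not been completed yet."""
    return set(REQUIRED_CHECKS[change.tier] - change.completed_checks)


def may_merge(change: ChangeSet) -> bool:
    """A change may merge only when nothing required is missing."""
    return not missing_checks(change)
```

Keeping the policy in one mapping means a tier's requirements can be tightened in a single reviewed change rather than in each team's pipeline.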

Code review gates for AI-generated code: Your existing code review process likely assumes that the author understands the code they wrote. When AI generates the code, review must compensate for this gap. Require reviewers to verify not just correctness but also security implications, architectural consistency, and dependency choices. Consider requiring authors to annotate AI-generated sections with explanations of why they accepted the output.

Automated quality enforcement: Integrate static analysis, linting, security scanning (SAST/DAST), and dependency auditing into your CI/CD pipeline as non-optional gates. AI-generated code that fails these checks should be rejected automatically, regardless of how functional it appears. Tools like Cazton's Evals frameworks can help establish measurable quality thresholds for AI-generated outputs.
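
As a rough illustration of a non-optional gate, the sketch below runs a list of checks and reports every one that fails. The specific tools (`ruff`, `bandit`, `pip-audit`) and the `src` path are examples chosen for this sketch, not tools named by the article; substitute whatever linters and scanners your pipeline already uses. The command runner is injectable so the gate logic can be tested without the tools installed.

```python
import subprocess
from typing import Callable, Sequence

# Example gate commands (assumptions for this sketch).
GATES = [
    ("lint", ["ruff", "check", "."]),
    ("static security scan", ["bandit", "-r", "src"]),
    ("dependency audit", ["pip-audit"]),
]


def run_command(cmd: Sequence[str]) -> int:
    """Run one gate command and return its exit code."""
    try:
        return subprocess.run(list(cmd), check=False).returncode
    except FileNotFoundError:
        # Fail closed: a missing scanner counts as a failed gate.
        return 127


def failed_gates(
    gates: Sequence[tuple],
    runner: Callable[[Sequence[str]], int] = run_command,
) -> list[str]:
    """Run every gate (no short-circuit) and return the names that failed."""
    return [name for name, cmd in gates if runner(cmd) != 0]


def main() -> int:
    """Return a process exit code: 1 if any gate failed, otherwise 0."""
    failures = failed_gates(GATES)
    for name in failures:
        print(f"gate failed: {name}")
    return 1 if failures else 0
```

A CI job would call `sys.exit(main())` from its entry point. Running all gates rather than stopping at the first failure gives the author the full list of problems in one pass.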

Architecture-first development: The most effective defense against vibe coding debt is establishing strong architectural patterns before AI tools generate implementation code. When your enterprise architecture defines clear interfaces, data models, security boundaries, and integration contracts, AI-generated code has a defined structure to fit within. Without this architecture, each AI-generated component makes its own structural decisions, and those decisions rarely align.

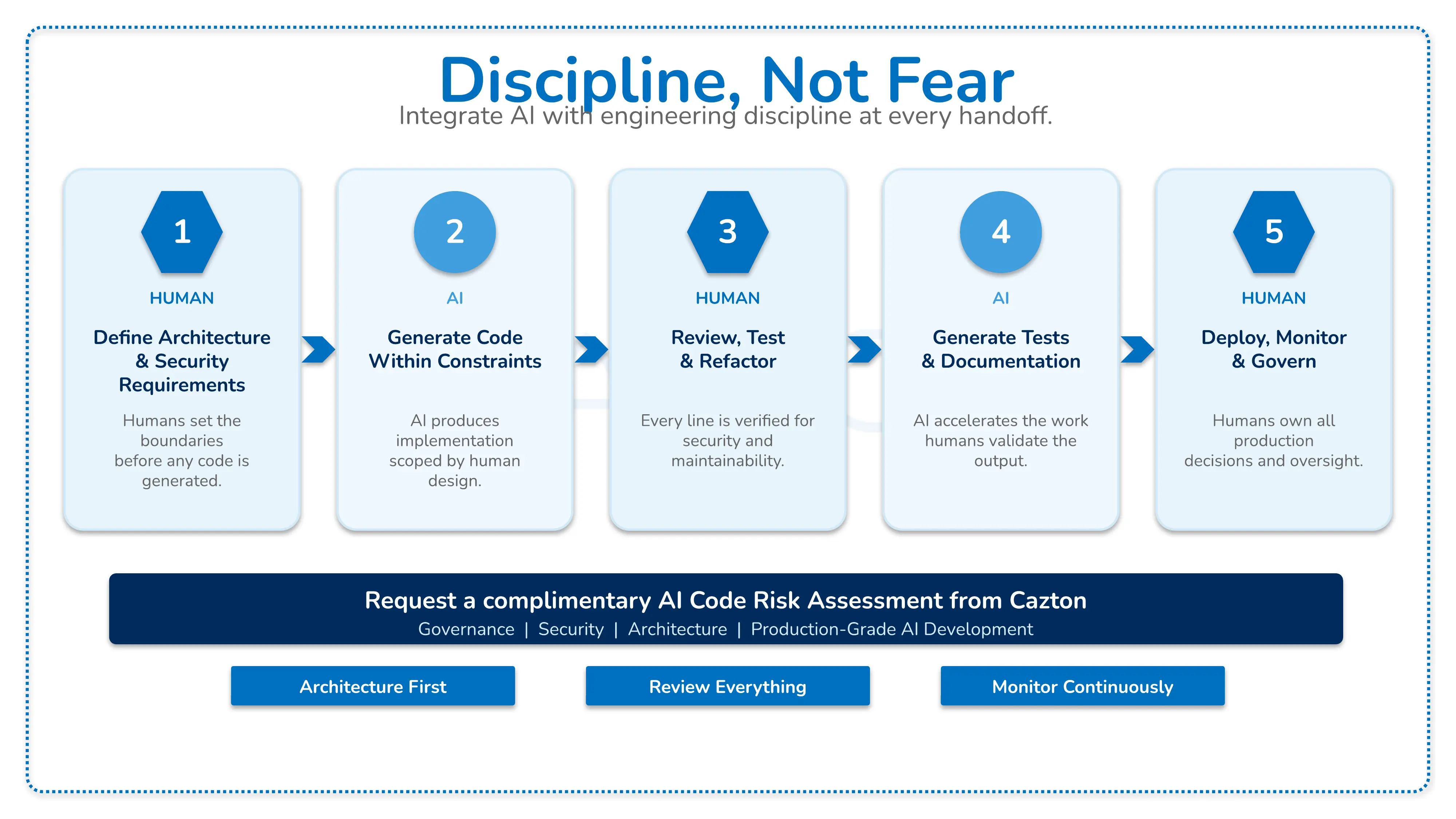

Hybrid workflows: The most productive teams combine AI coding tools with human expertise at specific integration points:

- Humans define architecture, interfaces, and security requirements

- AI generates implementation code within those constraints

- Humans review, test, and refactor the generated output

- AI assists with test generation and documentation

- Humans make all deployment and operational decisions

Developer enablement, not replacement: Frame AI coding tools as amplifiers of developer capability rather than substitutes for developer knowledge. Invest in training programs that teach developers how to evaluate and refactor AI-generated code effectively. The goal is developers who are more productive because they use AI wisely, not developers who cannot function without it.

Technical debt monitoring: Establish metrics to track the rate of debt accumulation in codebases where AI tools are heavily used. Monitor code duplication rates, test coverage trends, complexity metrics, and the ratio of new code to refactored code. When these metrics deteriorate, it signals that AI-generated code is accumulating faster than your team can maintain it. Cazton's Tech Debt Terminator practice provides systematic approaches to identifying, measuring, and resolving technical debt across enterprise codebases.
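
To make one of these metrics concrete, the sketch below estimates a duplication rate as the fraction of fixed-size line windows that occur more than once across a set of source files. This is a deliberately simple, assumed definition for illustration; dedicated clone-detection tools use more robust token-based or syntax-aware matching.

```python
from collections import Counter


def duplication_ratio(sources: dict[str, str], window: int = 4) -> float:
    """Fraction of `window`-line blocks that appear more than once.

    `sources` maps a file name to its text. Blank lines and leading or
    trailing whitespace are ignored so that re-indented copies still match.
    """
    counts: Counter = Counter()
    for text in sources.values():
        lines = [ln.strip() for ln in text.splitlines() if ln.strip()]
        for i in range(len(lines) - window + 1):
            counts[tuple(lines[i:i + window])] += 1
    total = sum(counts.values())
    if total == 0:
        return 0.0
    duplicated = sum(n for n in counts.values() if n > 1)
    return duplicated / total
```

Tracked per release, a rising ratio is an early signal that generated code is being pasted in rather than factored into shared functions.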

Enterprise Readiness: What Production-Grade AI-Assisted Development Requires

Moving AI-assisted development from individual developer experimentation to an enterprise-wide capability requires infrastructure, governance, and organizational investment that mirrors any other critical technology adoption.

Security integration: Your DevSecOps pipeline must treat AI-generated code as untrusted input. This means mandatory SAST/DAST scanning, dependency vulnerability checks, secrets detection, and container image scanning applied to every commit, regardless of whether a human or AI authored it. In environments using agentic tools like Claude Code or OpenAI Codex, ensure that agent permissions follow the principle of least privilege and that all agent actions are logged.
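
The least-privilege and logging requirements can be sketched as a small authorization layer that sits between an agent and the shell. Everything here is an assumption made for illustration: the allowlist contents, the denied `git` subcommands, and the logger name are hypothetical, and none of this reflects how any particular agent product handles permissions.

```python
import logging
import shlex
from typing import Optional

audit_log = logging.getLogger("agent.audit")

# Hypothetical policy: executables the agent may run at all.
ALLOWED_EXECUTABLES = frozenset({"git", "pytest", "ls"})
# Hypothetical policy: git subcommands that change shared or local state.
DENIED_GIT_SUBCOMMANDS = frozenset({"push", "reset", "clean"})


def authorize(command: str) -> Optional[list]:
    """Return the parsed argv if the command is permitted, else None.

    Every decision is written to the audit log, allowed or not.
    """
    try:
        argv = shlex.split(command)
    except ValueError:  # e.g. an unterminated quote
        argv = []
    allowed = bool(argv) and argv[0] in ALLOWED_EXECUTABLES
    if allowed and argv[0] == "git" and len(argv) > 1:
        allowed = argv[1] not in DENIED_GIT_SUBCOMMANDS
    audit_log.info("agent_command allowed=%s command=%r", allowed, command)
    return argv if allowed else None
```

The caller should execute the returned argument list directly, for example with `subprocess.run(argv)` and no shell, so that characters such as `&&` or `;` are passed as plain arguments instead of being interpreted as command separators.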

Observability for AI-generated systems: Code that developers did not write is code they may not know how to monitor. Establish observability standards that go beyond basic health checks: define business-relevant metrics, error classification schemes, and anomaly detection for every service, with special attention to components where AI-generated code interacts with production data or external systems.

Model and tool governance: Maintain an inventory of which AI coding tools are approved for use, which models they connect to, and what data they have access to. LLMs used for code generation may send code snippets and context to external APIs, creating data exposure risks. Evaluate whether your cloud and security policies address this data flow. Standards like Model Context Protocol (MCP) offer structured approaches to managing how AI tools interact with your systems, but they require deliberate governance to implement effectively.

Incident response planning: Update your incident response procedures to account for scenarios unique to AI-generated code: bugs in components no one on the team authored or understands, cascading failures from AI-generated configuration changes, and security incidents originating from vulnerabilities that passed automated scanning and became exploitable only under specific production conditions.

Organizational change management: Engineering teams need clear, non-punitive guidance on when and how to use AI coding tools. Without explicit policy, adoption patterns will vary wildly across teams, creating inconsistent quality and unpredictable risk exposure. Define policies collaboratively with engineering leadership, security, and compliance.

How Cazton Helps Your Organization Navigate AI-Assisted Development

The gap between the productivity promise of vibe coding and the operational reality of production software is precisely the space where most organizations need structured, expert guidance. Cazton brings the combination of AI expertise, software engineering depth, and enterprise architecture experience needed to make AI-assisted development work safely at scale.

AI development governance: We help your organization design and implement governance frameworks for AI coding tool adoption, including tiered use-case policies, review gate requirements, and compliance documentation. Our approach is practical: governance that enables velocity rather than blocking it. For organizations looking to validate their approach quickly, our AI Express PoC service delivers actionable findings within a single week.

Code quality and security assessment: Cazton's Evals practice extends beyond model evaluation to assess the quality of AI-generated code across your repositories. We identify security vulnerabilities, architectural inconsistencies, and technical debt introduced by AI-assisted development and provide actionable remediation plans.

Technical debt remediation: When vibe-coded prototypes have already grown into production systems (a common pattern), our Tech Debt Terminator practice provides systematic refactoring, architecture rationalization, and incremental modernization. We help your teams bring AI-generated codebases up to enterprise standards without starting from scratch.

Developer training and enablement: Through Cazton's training programs, we help your engineering teams develop the skills to use AI coding tools effectively: prompt engineering for code generation, AI output review techniques, security-aware development with AI tools, and architectural thinking that guides rather than follows AI suggestions.

DevSecOps and CI/CD integration: Our DevOps consulting practice helps organizations build CI/CD pipelines that enforce quality standards on AI-generated code, integrating static analysis, security scanning, automated testing, and deployment gates that catch AI-introduced issues before they reach production.

Enterprise architecture for AI-assisted development: We work with your architecture team to define the guardrails, from interface contracts and data models to security boundaries and integration patterns, that channel AI-generated code into structures that scale. This architectural foundation is the single most effective control for managing vibe coding risk.

From Vibe Code to Production Code: What Course-Correction Looks Like

The risks outlined above play out in predictable patterns across industries, and they are exactly the kinds of problems Cazton resolves. The following scenarios illustrate the most common failure modes we encounter and the approaches we use to address them.

E-Commerce Platform: Vibe-Coded Checkout Flow Exposes Customer Payment Data

A mid-market e-commerce company used AI coding tools to rapidly build a new checkout and payment processing flow. The development team, under pressure to ship before a seasonal sales peak, used vibe coding to generate the entire payment integration, form validation, and order processing pipeline in under a month. The prototype worked, passed basic functional testing, and went live.

Within weeks of launch, the company's security team discovered that the AI-generated payment form was transmitting unencrypted card data to a logging endpoint that had been scaffolded during development but never removed. The AI-generated input validation accepted malformed data that downstream systems interpreted in unexpected ways. Session tokens were being stored in localStorage rather than secure, HttpOnly cookies, leaving them vulnerable to cross-site scripting.

Cazton's approach: A scenario like this calls for a full security and code quality assessment of the checkout codebase. That means identifying every security vulnerability across the AI-generated code, including payment data exposure, broken authentication boundaries, and injection vectors, then implementing a remediation plan: rebuilding the payment flow with proper encryption, removing rogue development endpoints, replacing insecure session management with secure cookie handling, and adding automated SAST/DAST gates to the CI/CD pipeline. This type of remediation can be completed without taking the storefront offline, and we establish review gate requirements for all future payment-adjacent code, regardless of whether a human or AI authored it.
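
For the session-handling part of such a remediation, the sketch below shows one way to build a `Set-Cookie` value with the `HttpOnly`, `Secure`, and `SameSite` attributes using Python's standard library. The cookie name `session`, the one-hour lifetime, and the function name are assumptions for illustration; most web frameworks expose equivalent options on their own response objects.

```python
from http.cookies import SimpleCookie


def session_cookie_header(token: str, max_age_seconds: int = 3600) -> str:
    """Build a Set-Cookie value for a session token.

    HttpOnly keeps the token out of reach of page JavaScript (unlike
    localStorage), Secure restricts it to HTTPS, and SameSite=Strict
    stops it from being sent on cross-site requests.
    """
    cookie = SimpleCookie()
    cookie["session"] = token
    morsel = cookie["session"]
    morsel["httponly"] = True
    morsel["secure"] = True
    morsel["samesite"] = "Strict"
    morsel["path"] = "/"
    morsel["max-age"] = max_age_seconds
    return morsel.OutputString()
```

The returned string is what the server would send as the value of a `Set-Cookie` response header after a successful login.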

SaaS Startup: AI-Generated Codebase Becomes Unmaintainable

A Series A SaaS startup built its entire product using AI coding tools, moving from concept to paying customers in a matter of months. The small founding engineering team relied heavily on vibe coding to ship features at a pace that would normally require a team twice the size. The strategy worked initially: they closed enterprise contracts, raised additional funding, and grew the engineering team significantly.

The problems surfaced when the new engineers tried to build on the existing codebase. The AI-generated code had no consistent architectural patterns. Database queries were scattered across the application rather than centralized in a data access layer. The same business logic was duplicated in multiple services with slight variations, making it impossible to change a rule in one place. Test coverage was negligible. Deployments that should have taken hours were taking days because no one fully understood the dependency chain between services.

Cazton's approach: Our Tech Debt Terminator practice starts with a comprehensive codebase assessment that quantifies the scale of the problem: code duplication across services, lack of separation of concerns in the data layer, and circular dependencies between microservices. We design a phased remediation plan that the team can execute incrementally alongside feature development, working with the engineering team to extract a shared data access layer, consolidate duplicated business logic into canonical services, establish consistent architectural patterns for new development, and bring test coverage to a level the team can maintain with confidence. The result is faster feature velocity, shorter deployment cycles, and an engineering team that can ship the features the business needs.
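
To illustrate what extracting a shared data access layer means in practice, the sketch below gathers the queries for one entity into a single class. It uses SQLite from the standard library only so the example is self-contained; the `customers` table, its columns, and the class name are hypothetical.

```python
import sqlite3
from typing import Optional


class CustomerRepository:
    """Single home for customer queries, so SQL is not scattered across services."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self._conn = conn
        self._conn.execute(
            "CREATE TABLE IF NOT EXISTS customers ("
            "id INTEGER PRIMARY KEY, email TEXT UNIQUE NOT NULL)"
        )

    def add(self, email: str) -> int:
        """Insert a customer and return the new row id."""
        # Parameterized query: values are bound by the driver, never
        # formatted into the SQL string.
        with self._conn:  # commits on success, rolls back on error
            cursor = self._conn.execute(
                "INSERT INTO customers (email) VALUES (?)", (email,)
            )
        return cursor.lastrowid

    def find_by_email(self, email: str) -> Optional[dict]:
        """Return the matching customer as a dict, or None if absent."""
        row = self._conn.execute(
            "SELECT id, email FROM customers WHERE email = ?", (email,)
        ).fetchone()
        return None if row is None else {"id": row[0], "email": row[1]}
```

Once callers depend on methods like `find_by_email` instead of inline SQL, a schema or business-rule change is made in one place and can be covered by a focused set of tests.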

Healthcare Technology Company: AI Coding Agent Introduces Compliance Violations

A healthcare technology company adopted an AI coding agent to accelerate development of a patient data management platform. The agent was given access to the repository and tasked with implementing new data export features and API integrations. The development team reviewed the agent's pull requests but focused primarily on whether the features worked correctly rather than on compliance implications.

During a pre-audit review, the compliance team discovered that the AI-generated export functionality was writing patient records to a temporary staging table without the access controls required by their regulatory framework. The agent had also generated API endpoints that returned full patient records when the specification only called for summary data, creating unnecessary data exposure. Audit logging for data access events had been partially implemented but was inconsistent, with several export paths missing audit trail entries entirely.

Cazton's approach: A situation like this calls for a combined DevSecOps and compliance assessment of the platform. We map every data flow the AI agent generated, identify compliance gaps across the export and API layers, and provide a prioritized remediation plan. Our team implements proper access controls on staging tables, rebuilds the API endpoints to return only the data specified in the interface contracts, and standardizes the audit logging framework across all data access paths. We then help the organization establish a governance framework for AI coding agent usage that requires compliance review on any pull request touching patient data or regulatory surfaces, and scope agent permissions to follow the principle of least privilege.
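
Two of these fixes, returning only the fields the contract specifies and logging every access, can be sketched together. The field names in `SUMMARY_FIELDS`, the logger name, and the function signature are hypothetical; a real interface contract and audit schema would define them.

```python
import logging

audit_log = logging.getLogger("patient_data.audit")

# Hypothetical contract: the only fields a summary response may contain.
SUMMARY_FIELDS = ("patient_id", "display_name", "last_visit")


def patient_summary(record: dict, requested_by: str) -> dict:
    """Project a full patient record down to the contracted summary fields.

    Fields are selected by allowlist, so a column added to the record
    later is not exposed unless it is deliberately added to the contract.
    """
    summary = {field: record[field] for field in SUMMARY_FIELDS if field in record}
    # One audit entry per read, emitted on the same path that returns data.
    audit_log.info(
        "patient_summary_read patient_id=%s requested_by=%s",
        record.get("patient_id"),
        requested_by,
    )
    return summary
```

Routing every export and API path through a function like this keeps the audit trail consistent, because there is no way to obtain the data without also producing the log entry.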

The Path Forward: Discipline, Not Fear

Vibe coding is not going away. The tools are improving rapidly, adoption is accelerating across the industry, and the productivity benefits for appropriate use cases are real. The organizations that thrive will not be those that ban AI coding tools or those that adopt them without controls. They will be the organizations that apply the same engineering discipline to AI-assisted development that they apply to every other critical capability.

Your teams can capture the speed of AI code generation while maintaining the quality, security, and maintainability that production systems demand. This requires clear policies, robust automation, strong architecture, and a culture that treats AI-generated code as a starting point for engineering, not a finished product.

The path forward is not choosing between human developers and AI tools. It is building the organizational capability to use both effectively, with the discipline to know which situations call for which approach.

Cazton's AI consulting practice helps enterprises navigate the transition to AI-assisted development with confidence. From Azure AI, Azure OpenAI, and OpenAI implementations to DevOps and Kubernetes infrastructure, we bring the blend of AI expertise and enterprise delivery experience that these initiatives demand. Request a complimentary AI Code Risk Assessment to understand your organization's exposure and build a roadmap for responsible AI-assisted development.

Cazton is composed of technical professionals with expertise gained all over the world and in all fields of the tech industry, and we put this expertise to work for you. We serve all industries, including banking, finance, legal services, life sciences & healthcare, technology, media, and the public sector. Check out some of our services:

- Artificial Intelligence

- Big Data

- Web Development

- Mobile Development

- Desktop Development

- API Development

- Database Development

- Cloud

- DevOps

- Enterprise Search

- Blockchain

- Enterprise Architecture

Cazton has expanded into a global company, serving clients not only across the United States but also in Oslo, Norway; Stockholm, Sweden; London, England; Berlin and Frankfurt, Germany; Paris, France; Amsterdam, Netherlands; Brussels, Belgium; Rome, Italy; Sydney and Melbourne, Australia; and Quebec City, Toronto, Vancouver, Montreal, Ottawa, Calgary, Edmonton, Victoria, and Winnipeg, Canada. In the United States, we provide our consulting and training services in cities including Austin, Dallas, Houston, New York, New Jersey, Irvine, Los Angeles, Denver, Boulder, Charlotte, Atlanta, Orlando, Miami, San Antonio, San Diego, San Francisco, San Jose, Stamford, and others. Contact us today to learn more about what our experts can do for you.